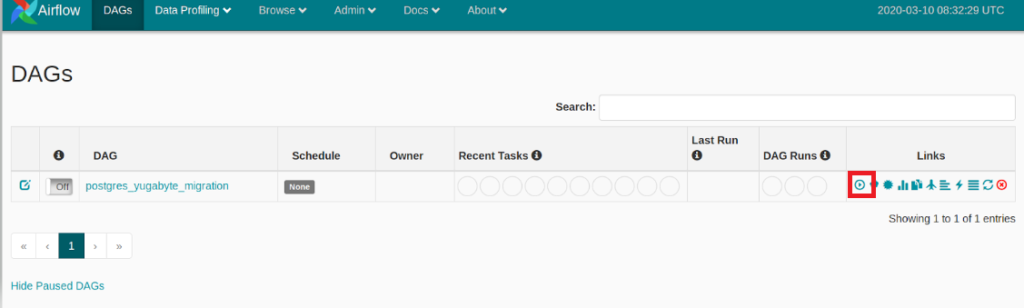

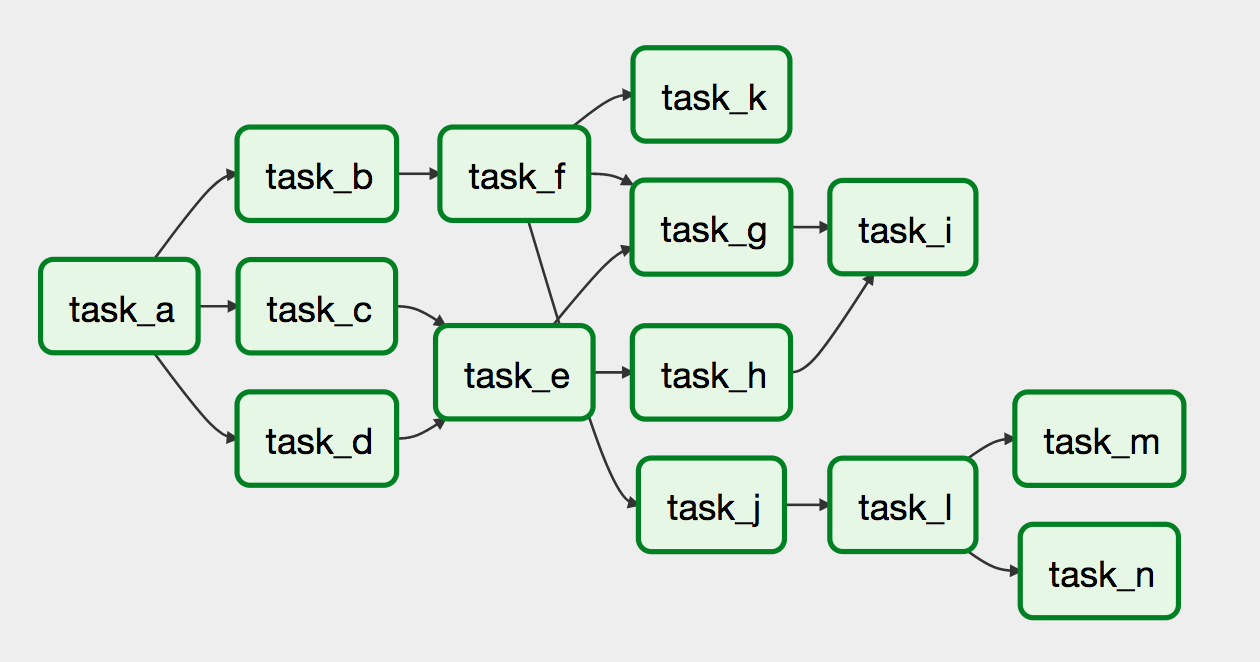

Apache Airflow is a platform to programmatically author, schedule, and monitor workflows. It is used to author workflows as directed acyclic graphs (DAGs) of tasks. The Apache Airflow scheduler executes your tasks on an array of workers while following the specified dependencies. Rich command line utilities make performing complex surgeries on DAGs a snap. The rich user interface makes it easy to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed. When workflows are defined as code, they become easier to maintain, version, test and collaborate on.

Customer Issue

Apache Airflow is an amazingly powerful open-source workflow tool that has been picking up steam in the past few years. One of the core features that makes it so powerful is that all workflows are defined with code. This gives us enormous flexibility and allows us to use Apache Airflow whenever needed. Let's say we want to create a new DAG dynamically, where the content of the DAG, including what kinds of tasks to perform (Operators) and the scheduling of the workflow, depends on a trigger event.

A dynamic workflow in Apache Airflow means that we have a DAG with a bunch of tasks inside it and, depending on some outside parameters that are unknown at the time the DAG starts, it will sometimes create different numbers and/or types of tasks. For example, the DAG starts and its first task runs a query that returns five records from a database. If we want a distinct task to process each of those records, we can dynamically spin up five tasks to do that. Maybe the next time the DAG runs, it only gets one record back, and so in that run only one task is spun up.

An Example of DAG with a dynamic workflow

As you can see, there are more tasks compared to when the DAG first started. The DAG does not only have to change the number of tasks it spins up; it can also create different types of tasks based on each record. In this article, I will provide the steps to achieve this functionality.

Design overview

Apache Airflow Variables were used to achieve this functionality. These variables are a valuable resource but are often overlooked in people's designs. It is important to note that using them is not the only solution: AWS S3 or a database could be used, among other options, to store these values. Apache Airflow Variables can be set programmatically using the Python library, through the command line, or from the Apache Airflow UI.
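To make the Variable-based approach discussed in this article more concrete, here is a minimal sketch of how a DAG file might read a list of records from an Airflow Variable and create one task per record, choosing the operator type from each record. It assumes Airflow 2.4 or later. The Variable key `dynamic_records`, the record fields `id` and `kind`, and the helpers `plan_tasks`, `process_record`, and `build_dag` are all hypothetical names chosen for illustration, not part of the original design.

```python
import json


def plan_tasks(raw_value):
    """Turn the JSON text stored in a Variable into a list of task specs."""
    records = json.loads(raw_value) if raw_value else []
    return [
        {
            "task_id": f"process_{record['id']}",
            "kind": record.get("kind", "python"),  # hypothetical field
            "payload": record,
        }
        for record in records
    ]


def process_record(payload):
    """Placeholder callable; real processing logic would go here."""
    print(f"processing {payload}")


def build_dag(dag_id="dynamic_workflow", variable_key="dynamic_records"):
    # Airflow is imported lazily so plan_tasks can be exercised without it.
    from datetime import datetime

    from airflow import DAG
    from airflow.models import Variable
    from airflow.operators.bash import BashOperator
    from airflow.operators.python import PythonOperator

    # Read at parse time: the task list reflects whatever the Variable
    # held when the scheduler last parsed this file.
    raw_value = Variable.get(variable_key, default_var="[]")

    with DAG(
        dag_id,
        start_date=datetime(2024, 1, 1),
        schedule=None,
        catchup=False,
    ) as dag:
        for spec in plan_tasks(raw_value):
            if spec["kind"] == "bash":
                BashOperator(
                    task_id=spec["task_id"],
                    bash_command=f"echo {spec['payload']['id']}",
                )
            else:
                PythonOperator(
                    task_id=spec["task_id"],
                    python_callable=process_record,
                    op_kwargs={"payload": spec["payload"]},
                )
    return dag
```

In a DAG file you would typically call `dag = build_dag()` at module level so that Airflow discovers it. An upstream task could store the records with `Variable.set("dynamic_records", json.dumps(records))`, and the same value could be set from the command line with `airflow variables set dynamic_records '<json>'`. Because the Variable is read when the file is parsed, the set of tasks follows the stored value at parse time rather than changing in the middle of a run.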
